How Modern Observability Tools Cut Downtime When Your Customer Systems Go Down

Digital systems fail. That's not the problem anymore. The problem is how long they stay broken while customers watch, wait, and eventually leave. In an environment where users expect instant responses across every device and platform, downtime isn't just a technical issue; it's a public relations crisis that unfolds in real time across social media, review sites, and customer support channels.

Mean time to recovery (MTTR) has emerged as the defining metric for operational resilience. It measures the average time between when something breaks and when it's fixed. For companies running customer-facing digital services, this number often matters more than how frequently problems occur. A five-minute outage resolved quickly might barely register with users. An incident that drags on for hours can permanently damage customer relationships and tank quarterly revenue.
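To make the definition concrete, here is a minimal sketch of the calculation in Python. The function name and the two incidents are hypothetical, and it assumes each incident is recorded as a pair of start and resolution timestamps.

```python
from datetime import datetime, timedelta

def mean_time_to_recovery(incidents):
    """Average time from failure start to resolution.

    `incidents` is a list of (started_at, resolved_at) datetime pairs.
    """
    if not incidents:
        raise ValueError("need at least one resolved incident")
    total = sum((resolved - started for started, resolved in incidents), timedelta())
    return total / len(incidents)

# Hypothetical incident log: one five-minute blip and one three-hour outage.
incidents = [
    (datetime(2024, 1, 10, 9, 0), datetime(2024, 1, 10, 9, 5)),
    (datetime(2024, 2, 2, 14, 0), datetime(2024, 2, 2, 17, 0)),
]
mttr = mean_time_to_recovery(incidents)
print(mttr)  # 1:32:30
```

A single long incident dominates the average, which is one reason teams often track the distribution of recovery times alongside the mean.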

The New Reality: Every Outage Is a Public Event

The shift toward customer-visible infrastructure has fundamentally changed how companies must think about system failures. When Netflix buffers or an Amazon checkout fails, users don't file internal tickets—they tweet, post reviews, and contact support simultaneously. The feedback loop that once took days or weeks to surface now happens in minutes.

This visibility creates a paradox. Modern architectures are more reliable than ever in terms of raw uptime percentages. Five nines (99.999% availability) translates to just over five minutes of downtime per year. But when those five minutes happen during peak shopping hours or a major product launch, the business impact can be catastrophic. A widely cited Gartner estimate puts the average cost of IT downtime at $5,600 per minute, though for large e-commerce operations, that figure can exceed $100,000 per minute during high-traffic periods.
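The arithmetic behind those availability figures is simple enough to check directly. The sketch below assumes a 365-day year and reuses the $5,600 per-minute figure quoted above purely as an illustrative input; the function names are made up for this example.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # 525,600, ignoring leap years

def downtime_budget_minutes(availability_percent):
    """Minutes of downtime per year allowed at a given availability."""
    return MINUTES_PER_YEAR * (1 - availability_percent / 100)

def downtime_cost(minutes, cost_per_minute=5_600):
    """Rough cost of an outage; the default rate is illustrative only."""
    return minutes * cost_per_minute

for target in (99.9, 99.99, 99.999):
    budget = downtime_budget_minutes(target)
    print(f"{target}% -> {budget:.2f} min/year")
# 99.9% -> 525.60 min/year
# 99.99% -> 52.56 min/year
# 99.999% -> 5.26 min/year
```

Each extra nine cuts the yearly budget by a factor of ten, which is why the timing of those few minutes matters more than the total.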

The pressure extends beyond immediate revenue loss. Customer expectations have been shaped by the most reliable services they use. If their banking app works flawlessly but your retail platform crashes during checkout, they don't compare you to your direct competitors—they compare you to their best digital experience. This creates an environment where MTTR becomes a competitive differentiator, not just an operational metric.

Why Modern Systems Are Harder to Fix Quickly

The architecture that enables modern digital services also makes them harder to troubleshoot. Microservices communicate through APIs. Cloud functions trigger asynchronously. Third-party services handle payments, authentication, analytics, and communications. A single user action might touch dozens of services across multiple providers and geographic regions.

This complexity manifests in several ways that directly impact MTTR. Real-time systems using WebSockets or server-sent events create persistent connections that can mask latency issues until they cascade. Edge routing improves performance but introduces geographic variability—a problem might only affect users in specific regions, making it harder to reproduce and diagnose. Service meshes that improve resilience can also obscure failure patterns, as requests automatically retry and reroute around problems.

Consider a typical IVR system for customer support. On the surface, it's a phone menu. Under the hood, it relies on telephony gateways, routing logic, identity verification APIs, CRM integrations, and backend databases. A failure in any layer can degrade the experience, but partial failures are particularly insidious. The system might work for 80% of callers while silently failing for others based on factors like geographic location, account type, or specific feature usage patterns.

Traditional monitoring approaches struggle with this complexity. They're designed to answer known questions: Is the server up? Is CPU usage normal? Is the database responding? But modern failures often present as subtle degradations rather than complete outages. Response times might increase by 200 milliseconds—not enough to trigger alerts, but enough to frustrate users and increase abandonment rates. A specific API endpoint might fail only when called with certain parameter combinations. These scenarios require investigation, not just monitoring.
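One way to catch the kind of 200-millisecond drift described above is to compare a recent window of latencies against a baseline window rather than against a fixed ceiling. The following is a rough sketch under that assumption; the thresholds, function names, and sample data are all hypothetical.

```python
import statistics

def p95(samples):
    """95th percentile of a list of latencies in milliseconds."""
    return statistics.quantiles(samples, n=100)[94]

def latency_regression(baseline_ms, current_ms, max_increase_ms=100.0):
    """Return (regressed, delta) comparing the p95 of two windows."""
    delta = p95(current_ms) - p95(baseline_ms)
    return delta > max_increase_ms, delta

STATIC_ALERT_MS = 1000  # hypothetical fixed threshold on a dashboard

# Synthetic data: the current window is uniformly 200 ms slower.
baseline = [300 + (i % 20) for i in range(200)]
current = [ms + 200 for ms in baseline]

regressed, delta = latency_regression(baseline, current)
print(p95(current) > STATIC_ALERT_MS)  # False: the static alert stays quiet
print(regressed)                       # True: the relative check fires
```

The static threshold never trips because the slower responses are still well under one second, while the comparison against the baseline flags the change.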

The Business Case for Reducing MTTR

The financial impact of extended recovery times compounds quickly. Direct revenue loss during downtime is obvious, but the secondary effects often prove more damaging. Customer churn accelerates after outages, particularly if they're frequent or poorly communicated. A 2024 survey by PagerDuty found that 86% of customers would switch providers after multiple negative experiences with digital services, and 32% would leave after a single significant outage.

Brand reputation takes longer to rebuild than systems. Social media amplifies customer frustration, and negative reviews persist long after problems are resolved. Companies that consistently demonstrate fast recovery times build trust that gives them a buffer during inevitable future incidents. Those with poor MTTR track records face skepticism even when they've genuinely improved their operations.

The operational costs of slow recovery extend beyond the incident itself. Engineering teams pulled into lengthy troubleshooting sessions can't work on planned features or improvements. Customer support teams get overwhelmed with inquiries they can't resolve. Product managers face difficult decisions about whether to roll back recent changes, potentially losing weeks of development work. The longer an incident drags on, the more organizational resources it consumes.

This reality has shifted engineering priorities. The goal is no longer preventing all failures—that's impossible in complex distributed systems. Instead, teams focus on detection speed, diagnostic efficiency, and recovery automation. Companies measure success not by uptime alone, but by their ability to identify, understand, and resolve problems before most customers notice.

Building Systems That Recover Faster

Reducing MTTR requires rethinking how systems expose their internal state. Observability—the ability to understand system behavior by examining its outputs—has become the foundation of fast recovery. This goes beyond traditional monitoring, which tells you when predefined thresholds are crossed. Observability lets you ask arbitrary questions about system behavior, even questions you didn't anticipate when you built the monitoring.

Effective observability combines three data types. Metrics provide quantitative measurements of system performance—request rates, error rates, latency percentiles. Logs capture discrete events with contextual information about what the system was doing when they occurred. Distributed traces follow individual requests as they flow through multiple services, revealing where time is spent and where failures originate.
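These three signals are most useful when they can be joined. A common approach, sketched here with only the Python standard library, is to stamp every log event with the identifier of the request it belongs to. The field names and helper functions are assumptions for illustration, not the schema of any particular tool.

```python
import json
import time
import uuid

def make_event(trace_id, service, message, **fields):
    """Build one structured log record as a JSON string."""
    record = {
        "ts": time.time(),
        "trace_id": trace_id,  # ties this event to a single request
        "service": service,
        "message": message,
        **fields,
    }
    return json.dumps(record, sort_keys=True)

def events_for_trace(log_lines, trace_id):
    """Collect every event that belongs to one request, across services."""
    return [e for e in map(json.loads, log_lines) if e["trace_id"] == trace_id]

# One hypothetical request passing through two services.
trace_id = uuid.uuid4().hex
lines = [
    make_event(trace_id, "checkout", "request received", route="/pay"),
    make_event(trace_id, "payments", "upstream timeout", duration_ms=3021, error=True),
    make_event(uuid.uuid4().hex, "checkout", "request received", route="/cart"),
]
for event in events_for_trace(lines, trace_id):
    print(event["service"], event["message"])
```

With a shared identifier, an engineer can move from a failing request to every log line it produced without guessing which service to search first.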

The key is high-cardinality data that preserves context. Knowing that error rates increased is useful. Knowing that errors increased specifically for mobile users in the EU accessing a particular feature after a recent deployment is actionable. This level of detail lets engineers quickly narrow the problem space from "something is wrong somewhere" to "this specific code path is failing under these conditions."
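The difference between an aggregate and a sliced view can be shown with a small sketch. It assumes each request event carries a few dimensions (platform, region, version) and an error flag; the events themselves are invented for the example.

```python
from collections import Counter

def error_rate_by(events, *dimensions):
    """Error rate for each combination of the given dimensions."""
    totals, errors = Counter(), Counter()
    for e in events:
        key = tuple(e[d] for d in dimensions)
        totals[key] += 1
        if e["error"]:
            errors[key] += 1
    return {key: errors[key] / totals[key] for key in totals}

# Hypothetical traffic: failures are concentrated in one slice.
events = (
    [{"platform": "mobile", "region": "eu", "version": "v42", "error": True}] * 8
    + [{"platform": "mobile", "region": "eu", "version": "v42", "error": False}] * 2
    + [{"platform": "mobile", "region": "us", "version": "v42", "error": False}] * 40
    + [{"platform": "web", "region": "eu", "version": "v41", "error": False}] * 50
)

overall = error_rate_by(events)                       # no dimensions: one bucket
sliced = error_rate_by(events, "platform", "region")  # broken out by two fields
print(overall[()])               # 0.08
print(sliced[("mobile", "eu")])  # 0.8
```

An 8% overall error rate looks like mild noise, but the same data sliced by platform and region shows one group of users failing most of the time.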

Alerts must balance sensitivity with specificity. Too many alerts and teams develop alarm fatigue, ignoring notifications until customers report problems. Too few and issues go undetected until they're severe. The best alerting strategies focus on customer impact rather than infrastructure metrics. Alert when user-facing error rates spike or when transaction completion rates drop, not just when CPU usage is high.
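As a rough illustration of alerting on customer impact, the sketch below pages only when user-facing signals degrade and downgrades a pure infrastructure signal to a ticket. The thresholds, field names, and severity labels are assumptions chosen for the example, not recommended values.

```python
from dataclasses import dataclass

@dataclass
class Window:
    """Counts observed over one evaluation window (fields are hypothetical)."""
    requests: int
    failed: int
    checkouts_started: int
    checkouts_completed: int
    cpu_percent: float

def evaluate(window, max_error_rate=0.02, min_completion=0.90, min_requests=100):
    """Return (severity, reasons). Only user-facing signals can page."""
    reasons = []
    if window.requests >= min_requests:
        error_rate = window.failed / window.requests
        if error_rate > max_error_rate:
            reasons.append(f"error rate {error_rate:.1%}")
    if window.checkouts_started >= min_requests:
        completion = window.checkouts_completed / window.checkouts_started
        if completion < min_completion:
            reasons.append(f"checkout completion {completion:.1%}")
    if reasons:
        return "page", reasons
    if window.cpu_percent > 90:
        return "ticket", ["cpu above 90%"]  # worth a look, not a wake-up call
    return "ok", []

busy_but_healthy = Window(10_000, 20, 500, 480, cpu_percent=95.0)
quiet_but_broken = Window(10_000, 600, 500, 300, cpu_percent=35.0)
print(evaluate(busy_but_healthy))  # ('ticket', ['cpu above 90%'])
print(evaluate(quiet_but_broken))  # ('page', ['error rate 6.0%', 'checkout completion 60.0%'])
```

The minimum request count keeps low-traffic windows from paging on a handful of failures, which is one simple guard against alarm fatigue.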

What Comes Next

The pressure to reduce MTTR will intensify as digital services become more central to business operations. AI-assisted diagnostics are beginning to help teams identify patterns and suggest remediation steps, though they're not yet reliable enough to fully automate incident response. Chaos engineering practices—deliberately injecting failures to test recovery procedures—are moving from cutting-edge to standard practice as teams recognize that the best time to improve MTTR is before an actual incident.
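At its smallest scale, fault injection can be as simple as wrapping a dependency so that it fails on purpose, then checking that the caller degrades gracefully. The sketch below is a toy version of that idea; the wrapper, the fake profile service, and the fallback value are all hypothetical and stand in for real infrastructure tooling.

```python
import random

class InjectedFault(Exception):
    """Raised in place of a real dependency failure."""

def with_faults(func, failure_rate, rng):
    """Wrap `func` so a fraction of calls fail deliberately."""
    def wrapper(*args, **kwargs):
        if rng.random() < failure_rate:
            raise InjectedFault(f"injected failure in {func.__name__}")
        return func(*args, **kwargs)
    return wrapper

def fetch_profile(user_id):
    """Stand-in for a call to a downstream service."""
    return {"user_id": user_id, "tier": "gold"}

def fetch_with_fallback(fetch, user_id, attempts=3):
    """Retry a few times, then fall back to a degraded default."""
    for _ in range(attempts):
        try:
            return fetch(user_id)
        except InjectedFault:
            continue
    return {"user_id": user_id, "tier": "unknown"}

rng = random.Random(7)  # seeded so a test run is repeatable
flaky = with_faults(fetch_profile, failure_rate=0.5, rng=rng)
results = [fetch_with_fallback(flaky, uid) for uid in range(1000)]
degraded = sum(r["tier"] == "unknown" for r in results)
print(f"{degraded} of {len(results)} requests fell back to the default")
```

The point of an exercise like this is that every request still gets an answer; the share that falls back to the default shows how much of the failure the recovery path absorbs.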

The companies that thrive will be those that treat fast recovery as a core competency, not just an operational goal. They'll invest in observability tools, train teams on diagnostic techniques, and build systems with recovery in mind from the start. They'll measure MTTR as rigorously as they measure feature velocity or customer acquisition costs, recognizing that in a world where outages are inevitable and public, the speed of recovery is what separates resilient businesses from vulnerable ones.

You Might Also Like

I've Tested Portable Power Stations for Years — Here's What I'd Actually Buy in the Last Hours of the Amazon Big Spring Sale

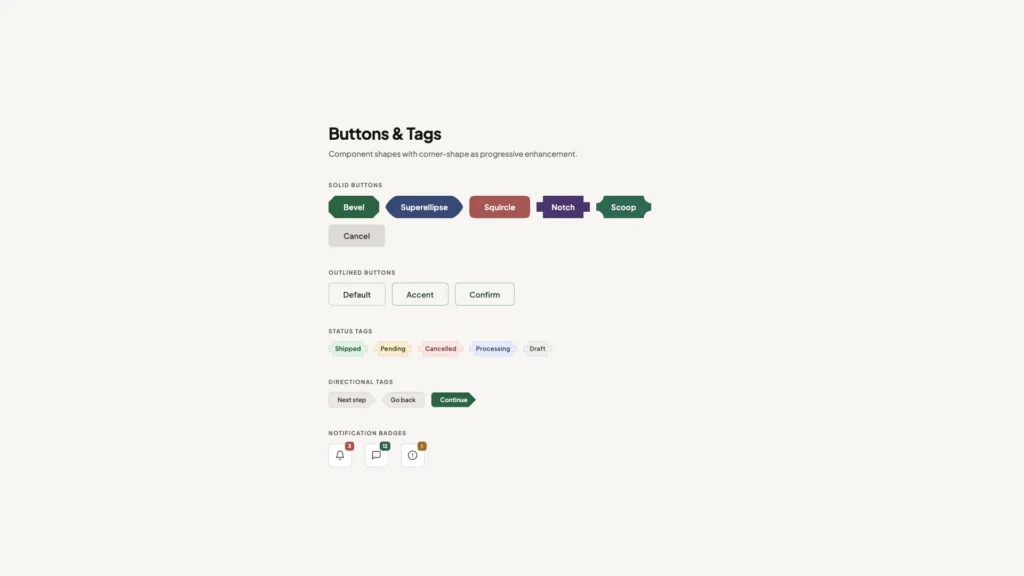

What's !important #8: Light/Dark Favicons, @mixin, object-view-box, and More