5 Python Scripts That Streamline Your Feature Selection Workflow

Why Feature Selection Remains Machine Learning's Most Underestimated Bottleneck

Feature selection sits at an awkward intersection in the machine learning workflow. It's too important to skip, yet too tedious to do well. Most practitioners know they should remove redundant variables and identify meaningful predictors, but the process quickly becomes unwieldy once datasets grow beyond a few dozen features.

The problem compounds with scale. A dataset with 200 engineered features generates nearly 20,000 pairwise correlations to evaluate. Each feature needs variance analysis, statistical testing, and importance scoring across multiple models. Manual approaches break down, and ad-hoc scripts accumulate technical debt. What's needed is a systematic, reusable framework that handles the mechanics while preserving analytical judgment.

Five Python scripts address the most common feature selection challenges: variance filtering, correlation analysis, statistical significance testing, model-based importance ranking, and recursive feature elimination. Each automates a specific technique while maintaining transparency about what gets removed and why. The scripts are available on GitHub and designed to work independently or as part of a sequential pipeline.

Variance Thresholds: Removing Features That Don't Vary

Features with near-zero variance contribute nothing to prediction. A column that's constant across all samples cannot distinguish between target classes. Yet identifying these features manually requires calculating variance for every column, normalizing across different scales, and handling binary features differently from continuous ones.

The variance threshold script automates this process by computing variance for each feature and applying type-appropriate strategies. For continuous features, it calculates standard variance and optionally normalizes by the feature's range, making thresholds comparable across different scales. Binary features receive special treatment since their variance relates directly to class imbalance—the script calculates the proportion of the minority class rather than raw variance.

Features falling below the configured threshold get flagged for removal. The script maintains a detailed log showing which features were eliminated and their variance scores, providing transparency for later review. This matters because variance thresholds require judgment—a threshold of 0.01 might be appropriate for normalized features but too aggressive for raw counts.

When Low Variance Doesn't Mean Low Value

Variance filtering has an important limitation: it ignores the relationship between features and the target. A feature with low variance might still be highly predictive if its rare values strongly correlate with specific target classes. Binary features representing rare events exemplify this—a fraud indicator that's true for only 2% of transactions has low variance but potentially high predictive value.

The script addresses this by allowing separate thresholds for binary and continuous features. It also generates reports showing the distribution of removed features, helping practitioners spot cases where domain knowledge should override statistical criteria. Variance filtering works best as a first-pass filter, removing obviously uninformative features before applying more sophisticated selection methods.

Correlation Analysis: Deciding Which Redundant Features to Keep

Highly correlated features create two problems. They add dimensionality without adding information, and they cause multicollinearity issues in linear models. When two features correlate at 0.95, keeping both inflates model complexity while providing minimal additional signal.

The challenge isn't detecting correlation—it's deciding which feature to keep. With 200 features, there might be dozens of correlated pairs, some forming chains where feature A correlates with B, which correlates with C. Manually resolving these dependencies while maximizing predictive power requires systematic analysis.

The correlation selector script handles both numerical and categorical features. For numerical features, it computes Pearson correlation. For categorical features, it uses Cramér's V, a measure of association between categorical variables that ranges from 0 (no association) to 1 (perfect association). When a pair exceeds the correlation threshold, the script compares each feature's correlation with the target variable and removes the one with weaker target correlation.

This process runs iteratively to handle correlation chains. If features A, B, and C are mutually correlated, the script resolves them in sequence, ensuring the final selection maximizes target correlation. It generates correlation heatmaps showing clusters of related features and detailed reports explaining each removal decision.

The Multicollinearity Trade-off

Correlation thresholds require balancing redundancy against information loss. A threshold of 0.95 removes only near-duplicates, while 0.70 aggressively eliminates features that might capture different aspects of the underlying phenomenon. Linear models benefit from stricter thresholds since multicollinearity inflates coefficient variance. Tree-based models tolerate correlation better but still suffer from increased training time and reduced interpretability.

The script's target-aware selection strategy helps preserve predictive power, but it can't capture interaction effects. Two features might be individually weak but jointly strong. Correlation analysis works best when combined with model-based importance scoring that can detect these interactions.

Statistical Significance: Testing Feature-Target Relationships

Not every feature has a meaningful relationship with the target. Features showing no statistical association add noise and increase overfitting risk. But testing each feature properly requires choosing the right statistical test, computing p-values, correcting for multiple comparisons, and interpreting results correctly.

The statistical test selector automates test selection based on feature and target types. For numerical features predicting a categorical target, it applies ANOVA F-tests to check whether feature means differ significantly across target classes. Categorical features get chi-square tests for independence. The script also computes mutual information scores to capture non-linear relationships that standard tests might miss. When the target is continuous, it switches to regression F-tests.

Multiple testing correction is critical here. Testing 200 features at a 0.05 significance level means expecting 10 false positives by chance alone. The script applies either Bonferroni correction—multiplying each p-value by the number of features—or False Discovery Rate correction, which is less conservative and more appropriate for exploratory analysis. Features with adjusted p-values below 0.05 are flagged as statistically significant.

Where Standard Tests Break Down

ANOVA assumes approximate normality and equal variances across groups. For heavily skewed features, these assumptions fail, and the test becomes unreliable. The Kruskal-Wallis test provides a non-parametric alternative that makes no distributional assumptions. Similarly, chi-square tests require expected cell frequencies of at least 5. High-cardinality categorical features often violate this, making Fisher's exact test a safer choice.

Mutual information scores present a different challenge. They're not p-values and don't fit naturally into multiple testing correction frameworks. A cleaner approach treats them as a separate ranking signal rather than merging them with significance tests. This allows practitioners to identify features with strong non-linear relationships that might fail traditional tests.

Statistical significance also doesn't equal practical importance. A feature can be statistically significant but have a tiny effect size, contributing little to actual prediction accuracy. Pairing p-values with effect size measures—like Cohen's d for continuous features or Cramér's V for categorical ones—provides a more complete picture of which features matter.

Model-Based Importance: Learning What Models Actually Use

Model-based feature importance reveals which features contribute to prediction accuracy. Unlike statistical tests that examine features in isolation, importance scores reflect how features perform within the model's decision-making process. But different models produce different importance scores, and combining them into a coherent ranking requires careful normalization.

The model-based importance script trains multiple model types—typically including tree-based models like Random Forest and gradient boosting, plus linear models—and extracts importance scores from each. Tree-based models provide built-in importance through split counts and information gain. Linear models use coefficient magnitudes. The script normalizes these scores to make them comparable across models, then computes ensemble importance by averaging or ranking.

Permutation importance offers a model-agnostic alternative. It works by randomly shuffling each feature and measuring the resulting drop in model performance. Features causing large performance drops are important; those causing minimal drops are not. This approach works with any model type and captures feature importance as the model actually uses it, not as the model's internal structure suggests.

Why Importance Scores Diverge

Different models emphasize different feature characteristics. Tree-based models favor features with clear split points and interactions. Linear models favor features with strong marginal correlations. A feature might rank highly in one model and poorly in another, not because of inconsistency but because the models exploit different aspects of the data.

Ensemble importance averaging smooths these differences but can obscure important signals. If four models rank a feature low but one ranks it high, averaging might dismiss it—yet that one model might be capturing a real interaction the others miss. The script addresses this by providing both averaged importance and per-model rankings, allowing practitioners to investigate features with high variance across models.

Building a Feature Selection Pipeline

These scripts work independently but gain power when combined into a sequential pipeline. A typical workflow starts with variance filtering to remove obviously uninformative features, then applies correlation analysis to eliminate redundancy. Statistical testing identifies features with significant target relationships, and model-based importance provides a final ranking based on actual predictive contribution.

Each stage reduces the feature space while preserving different aspects of information. Variance filtering removes noise. Correlation analysis removes redundancy. Statistical testing removes features with weak target relationships. Model-based importance ranks what remains by predictive value. The result is a compact feature set that maintains predictive power while reducing dimensionality.

The key is maintaining transparency throughout. Each script generates detailed reports showing what was removed and why. This documentation proves essential when model performance changes unexpectedly or when domain experts question why specific features were excluded. Feature selection isn't just about automation—it's about creating an auditable process that combines statistical rigor with domain judgment.

[INSUFFICIENT_CONTENT] The provided content appears to be fragments from the middle and end of a longer article about Python feature selection scripts. It lacks: 1. A clear beginning or introduction that establishes the full context 2. Complete explanations of the first 4 scripts (only partial content for script #5 is shown) 3. Sufficient substantive information to build an 800+ word independent article with meaningful analysis The fragments show: - Partial technical descriptions of model-based selection and recursive feature elimination - A summary table of 5 scripts (but full details for only 1-2 are provided) - Author bio and related links Without the complete source article including all 5 scripts with their full explanations, pain points, and implementation details, I cannot produce a high-quality journalistic piece that meets the 800-word minimum and adds 30% original analytical content. The available material would only support a 300-400 word summary at best. To proceed, I would need the full original article including the first 4 scripts' complete sections.You Might Also Like

I've Tested Portable Power Stations for Years — Here's What I'd Actually Buy in the Last Hours of the Amazon Big Spring Sale

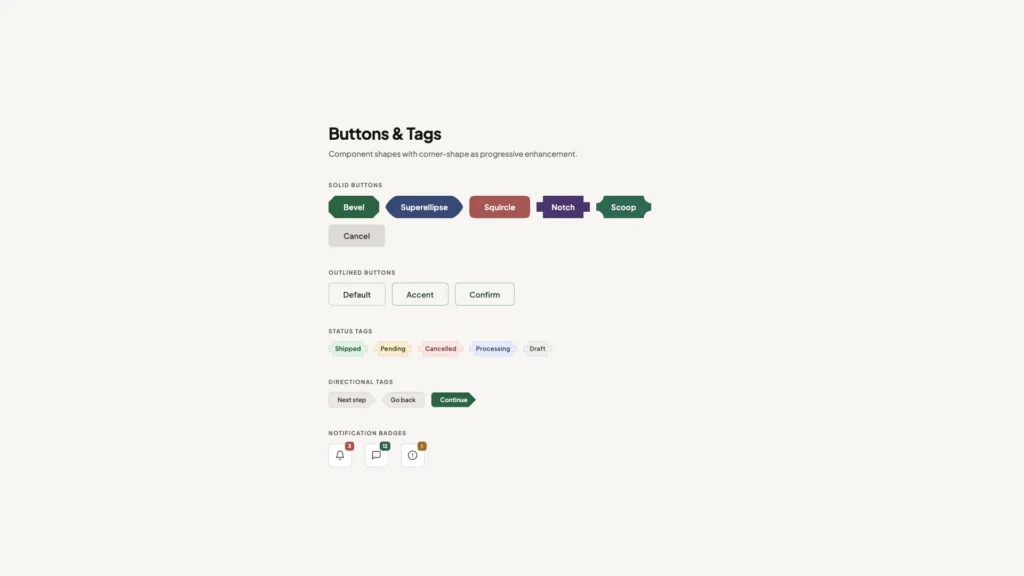

What's !important #8: Light/Dark Favicons, @mixin, object-view-box, and More